Dear Gini:

My company’s “powers that be” still make many decisions based on intuition rather than on evidence supported by analytics and strategic approaches, no matter how important those decisions might be. The decision-makers rely on intuition because of assumed relationships between data elements and risk, or because of poorly understood risk-versus-reward trade-offs. We have scores and all the tools we need to implement them, but management is stuck in the past. Do you have any suggestions on how to move them a tiny bit into the 21st century?

P.S. Management believes it’s in fact using human intelligence to make better decisions.

Sincerely,

Strategy Stan

——————-

Dear Strategy Stan:

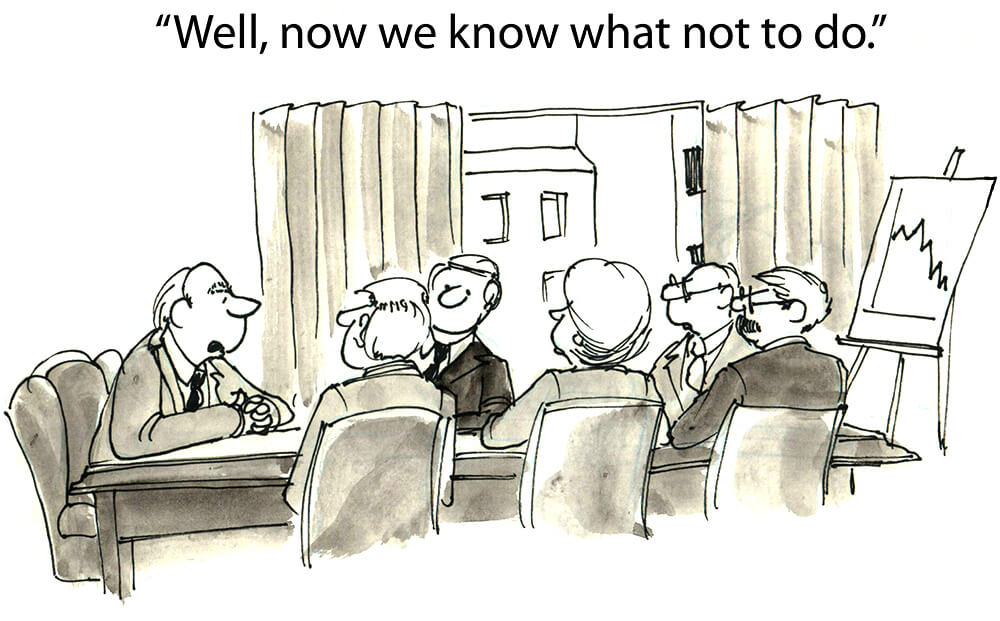

Determining which is more accurate – human intelligence and intuition or analytics – has been tested time and again over the years. SPOILER ALERT! Analytics always wins! Why? Webster’s dictionary defines intuition as “the power of knowing without conscious reasoning.” Members of management often believe their gut beliefs are right without consciously applying the reasoning and analytical skills we hope they have. So, they end up second-guessing empirical models. Maybe they do so out of hubris, pride, or misguided goodwill, but you’re right to want to bring your company into the 21st century.

In formal, controlled experiments where scoring model decisions and intuition-based decisions were compared and tracked in a true horse race, the results show there is no marginal value in using intuition on top of the score. In fact, using intuition often has a negative impact.

To show your leadership that analytics are more accurate than intuition, convince them to conduct a true cross study. Let a specific number of accounts be booked or rejected based solely on the scores your company has. For each account, the intuitive decision should also be recorded, along with the reason the decision-maker would have booked or rejected it. You would need to wait a sufficient period of time to allow all accounts to perform before determining the results. (I know waiting is so hard, but it’s a necessary evil.) Once the waiting period is over, analyze the results. See how the accounts performed. Now, you won’t have performance data on accounts that the score rejected but credit managers would have approved, because those accounts were never booked, but you will have the reverse: accounts that the score approved but credit managers would have declined.
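If it helps to picture the analysis step, here is a minimal Python sketch of one way to do it. It assumes each account record carries three fields that I made up for illustration (`booked`, `intuition`, and `went_bad`); your own system will name and define these differently, and the function name is hypothetical too. The idea is simply to take the accounts the score booked and compare how often they went bad, split by what the intuitive decision would have been.

```python
from collections import defaultdict


def bad_rate_by_intuition(accounts):
    """Return the bad rate of score-booked accounts, grouped by the
    intuitive decision recorded for each one.

    Assumed (hypothetical) record fields:
      booked    - True if the score approved and the account was opened
      intuition - "approve" or "decline", the recorded gut call
      went_bad  - True if the account underperformed in the waiting period
    """
    tallies = defaultdict(lambda: [0, 0])  # intuition -> [bad count, total]
    for account in accounts:
        if not account["booked"]:
            # Score-rejected accounts were never opened, so there is
            # no performance to measure for them.
            continue
        tally = tallies[account["intuition"]]
        tally[0] += int(account["went_bad"])
        tally[1] += 1
    return {call: bad / total for call, (bad, total) in tallies.items()}


# Placeholder records that only show the shape of the input.
# They are invented and say nothing about real outcomes.
accounts = [
    {"booked": True, "intuition": "approve", "went_bad": False},
    {"booked": True, "intuition": "approve", "went_bad": True},
    {"booked": True, "intuition": "decline", "went_bad": False},
    {"booked": True, "intuition": "decline", "went_bad": True},
    {"booked": False, "intuition": "approve", "went_bad": False},
]

rates = bad_rate_by_intuition(accounts)
```

If the "decline" group performs about as well as the "approve" group, intuition would have turned away accounts that were worth booking. With a real study you would also want enough accounts in each group for the comparison to mean something.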

Here’s what Gini predicts the results will be. Drumroll, please! The accounts that the score approved but the intuitive decision-makers wanted to decline will turn out to be good risks. Aren’t you glad you trusted your scoring model? I’m willing to bet the ones that would have been approved as a result of intuitive thinking instead of rejected because of score would have shown their true colors by underperforming.

Good luck,

Gini

Ask Gini Terms

Content provided in this blog is for entertainment purposes only. Ask Gini blogs do not reflect the opinion of BankersLab. BankersLab makes no representations as to the accuracy or completeness of information in this blog. BankersLab is not liable for any errors or omissions in this information nor for the availability of this information. These terms and conditions of use are subject to change at any time and without notice.